It’s evident from the amount of news coverage, articles, blogs, and water cooler stories that artificial intelligence (AI) and machine learning (ML) are changing our society in fundamental ways, and that the industry is evolving quickly to try to keep up with the explosive growth.

Unfortunately, the network that we’ve used in the past for high-performance computing (HPC) cannot scale to meet the demands of AI/ML. As an industry, we must evolve our thinking and build a scalable and sustainable network for AI/ML.

Today, the industry is fragmented between AI/ML networks built around four unique architectures: InfiniBand, Ethernet, telemetry assisted Ethernet, and fully scheduled fabrics.

Each technology has its pros and cons, and various tier 1 web scalers view the trade-offs differently. This is why we see the industry moving in many directions simultaneously to meet the rapid large-scale buildouts occurring now.

This reality is at the heart of the value proposition of Cisco Silicon One.

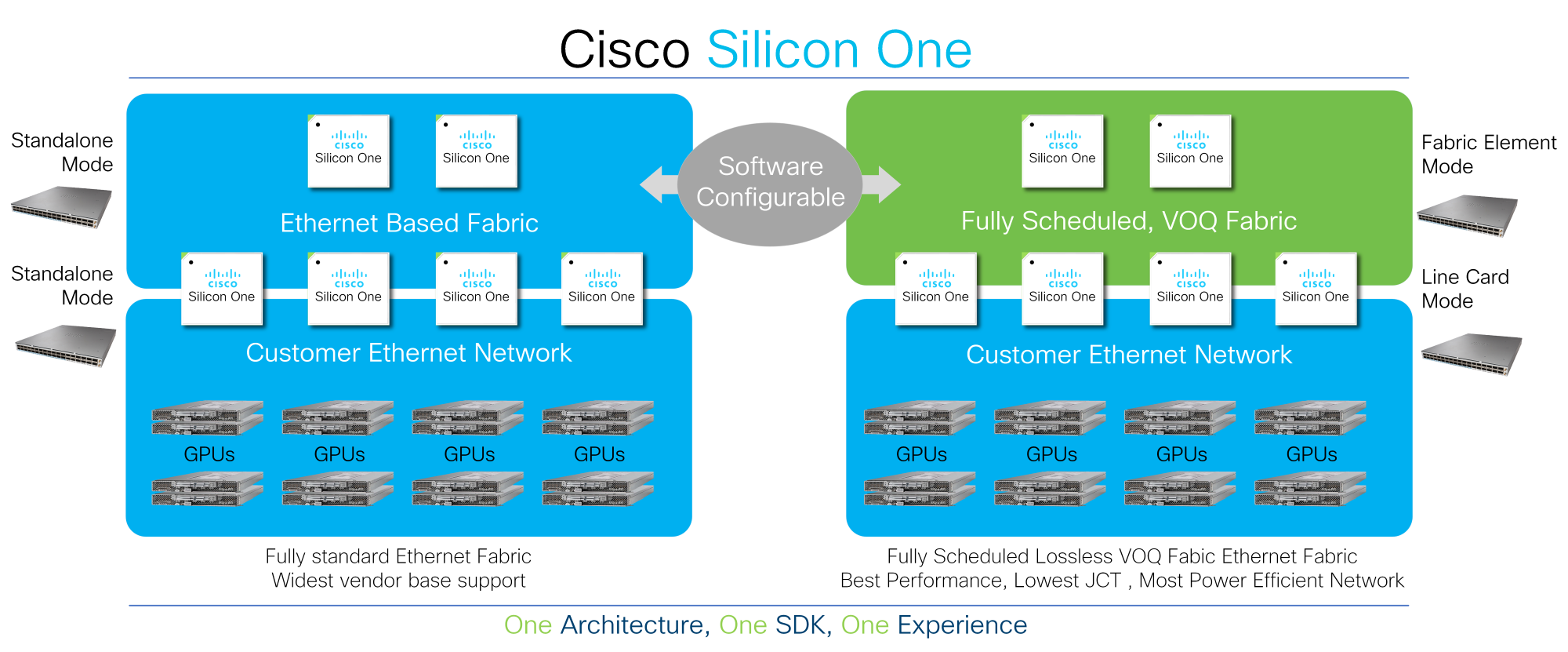

Customers can deploy Cisco Silicon One to power their AI/ML networks and configure the network to use standard Ethernet, telemetry assisted Ethernet, or fully scheduled fabrics. As workloads evolve, they can continue to evolve their thinking with Cisco Silicon One’s programmable architecture.

All other silicon architectures on the market lock organizations into a narrow deployment model, forcing customers to make early buying-time decisions and limiting their flexibility to evolve. Cisco Silicon One, however, gives customers the flexibility to program their network into various operational modes and provides best-of-breed characteristics in each mode. Because Cisco Silicon One can enable multiple architectures, customers can focus on the reality of the data and then make data-driven decisions according to their own criteria.

To help understand the relative merits of each of these technologies, it’s important to understand the fundamentals of AI/ML. Like many buzzwords, AI/ML is an oversimplification of many unique technologies, use cases, traffic patterns, and requirements. To simplify the discussion, we’ll focus on two aspects: training clusters and inference clusters.

Training clusters are designed to create a model using known data. These clusters train the model. This is an incredibly complex iterative algorithm that is run across a massive number of GPUs and can run for many months to generate a new model.

Inference clusters, meanwhile, take a trained model to analyze unknown data and infer the answer. Simply put, these clusters infer what the unknown data is with an already trained model. Inference clusters are much smaller computational models. When we interact with OpenAI’s ChatGPT or Google Bard, we are interacting with the inference models. These models are the result of very significant training, with billions or even trillions of parameters, over a long period of time.

In this blog, we’ll focus on training clusters and analyze how Ethernet, telemetry assisted Ethernet, and fully scheduled fabrics perform. I shared further details about this topic in my OCP Global Summit, October 2022 presentation.

AI/ML training networks are built as self-contained, massive back-end networks and have significantly different traffic patterns than traditional front-end networks. These back-end networks are used to carry specialized traffic between specialized endpoints. In the past, they were used for storage interconnect; however, with the advent of remote direct memory access (RDMA) and RDMA over Converged Ethernet (RoCE), a significant portion of storage networks are now built over generic Ethernet.

Today, these back-end networks are being used for HPC and massive AI/ML training clusters. As we saw with storage, we are witnessing a migration away from legacy protocols.

The AI/ML training clusters have unique traffic patterns compared to traditional front-end networks. The GPUs can fully saturate high-bandwidth links as they send the results of their computations to their peers in a data transfer known as the all-to-all collective. At the end of this transfer, a barrier operation ensures that all GPUs are up to date. This creates a synchronization event in the network that causes GPUs to be idled, waiting for the slowest path through the network to complete. The job completion time (JCT) measures the performance of the network to ensure all paths are performing well.
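To make the barrier effect concrete, here is a minimal sketch of why the slowest path sets the JCT. The 400 Gb/s link rate, the 20 paths, and the use of 64 MB per flow are illustrative assumptions, not figures from the study.

```python
def job_completion_time(bytes_per_flow, path_rates_gbps):
    """Return the JCT in seconds for one barrier-synchronized transfer.

    Each flow moves bytes_per_flow bytes over its own path. The barrier
    releases only when the slowest flow has finished, so the JCT is the
    maximum of the per-flow finish times.
    """
    finish_times = [bytes_per_flow * 8 / (rate * 1e9) for rate in path_rates_gbps]
    return max(finish_times)


# Hypothetical numbers: 20 paths, 64 MB per flow, 400 Gb/s links.
ideal = job_completion_time(64e6, [400.0] * 20)

# Same transfer, but one path is congested and runs at half rate.
actual = job_completion_time(64e6, [400.0] * 19 + [200.0])

print(f"ideal JCT:  {ideal * 1e3:.2f} ms")
print(f"actual JCT: {actual * 1e3:.2f} ms")
```

In this toy case a single slow path out of 20 doubles the completion time for every GPU waiting at the barrier.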

This traffic is non-blocking and results in synchronous, high-bandwidth, long-lived flows. It is vastly different from the data patterns in the front-end network, which are primarily built out of many asynchronous, small-bandwidth, and short-lived flows, with some larger asynchronous long-lived flows for storage. These differences, along with the importance of the JCT, mean network performance is critical.

To analyze how these networks perform, we created a model of a small training cluster with 256 GPUs, eight top of rack (TOR) switches, and four spine switches. We then used an all-to-all collective to transfer a 64 MB collective size and varied the number of simultaneous jobs running on the network, as well as the amount of speedup in the network.
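As a rough sketch of the scale of this model, the arithmetic below derives a few quantities from the stated cluster size. It assumes GPUs are spread evenly across the TORs and across jobs, and that each GPU in a job sends to every other GPU in that job. Neither assumption is stated in the study, and the `Cluster` class is purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cluster:
    """Illustrative description of the modeled cluster (sizes from the text)."""
    gpus: int = 256
    tors: int = 8
    spines: int = 4

    @property
    def gpus_per_tor(self) -> int:
        # Assumes GPUs are distributed evenly across the TOR switches.
        return self.gpus // self.tors

    def gpus_per_job(self, jobs: int) -> int:
        # Assumes every job gets an equal share of the GPUs.
        return self.gpus // jobs

    def flows_per_job(self, jobs: int) -> int:
        # All-to-all: each GPU in the job sends to every other GPU in the job.
        n = self.gpus_per_job(jobs)
        return n * (n - 1)


cluster = Cluster()
for jobs in (1, 16):
    print(jobs, cluster.gpus_per_job(jobs), cluster.flows_per_job(jobs))
```

Under these assumptions, each TOR serves 32 GPUs, and running 16 jobs splits the cluster into 16-GPU groups with 240 flows each.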

The results of the study are dramatic.

Unlike HPC, which was designed for a single job, large AI/ML training clusters are designed to run multiple simultaneous jobs, similar to what happens in web scale data centers today. As the number of jobs increases, the effects of the load balancing scheme used in the network become more apparent. With 16 jobs running across the 256 GPUs, a fully scheduled fabric results in a 1.9x quicker JCT.
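One way to build intuition for why the load balancing scheme matters is a toy comparison between per-flow placement and even spraying. In this sketch, flow-based Ethernet load balancing is approximated by pinning each flow to a random uplink, and a scheduled fabric by splitting every flow evenly across all uplinks. The flow and link counts are hypothetical, and the sketch does not attempt to reproduce the 1.9x result.

```python
import random
from collections import Counter


def pinned_max_load(num_flows, num_links, rng):
    """Pin each flow to one pseudo-randomly chosen link (a stand-in for a
    flow hash) and return the flow count on the busiest link."""
    counts = Counter(rng.randrange(num_links) for _ in range(num_flows))
    return max(counts.values())


def sprayed_max_load(num_flows, num_links):
    """Split every flow evenly across all links, so each link carries the
    same share of the total traffic."""
    return num_flows / num_links


rng = random.Random(0)          # fixed seed so runs are repeatable
flows, links, trials = 32, 4, 1000

avg_pinned = sum(pinned_max_load(flows, links, rng) for _ in range(trials)) / trials
sprayed = sprayed_max_load(flows, links)

print(f"busiest link, pinned flows (avg): {avg_pinned:.2f} flows")
print(f"busiest link, sprayed traffic:    {sprayed:.2f} flows")
```

Because the barrier waits for the slowest path, the busiest link, not the average link, is what drives the JCT in this picture.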

Studying the data another way, if we monitor the amount of priority flow control (PFC) sent from the network to the GPU, we see that 5% of the GPUs slow down the remaining 95% of the GPUs. In comparison, a fully scheduled fabric provides fully non-blocking performance, and the network never pauses the GPU.

This means that, with a fully scheduled fabric, you can connect twice as many GPUs to the same size network. The goal of telemetry assisted Ethernet is to improve the performance of standard Ethernet by signaling congestion and improving load balancing decisions.

As I mentioned earlier, the relative merits of the various technologies vary by customer and are likely not constant over time. I believe Ethernet and telemetry assisted Ethernet, although lower performing than fully scheduled fabrics, are incredibly valuable technologies and will be deployed widely in AI/ML networks.

So why would customers choose one technology over the other?

Customers who want to enjoy the heavy investment, open standards, and favorable cost-bandwidth dynamics of Ethernet should deploy Ethernet for AI/ML networks. They can improve the performance by investing in telemetry and minimizing network load through careful placement of AI jobs on the infrastructure.

Customers who want to enjoy the full non-blocking performance of an ingress virtual output queue (VOQ), fully scheduled, spray and re-order fabric, resulting in an impressive 1.9x better job completion time, should deploy fully scheduled fabrics for AI/ML networks. Fully scheduled fabrics are also great for customers who want to save cost and power by removing network elements, yet still achieve the same performance as Ethernet, with 2x more compute for the same network.

Cisco Silicon One is uniquely positioned to provide a solution for either of these customers with a converged architecture and industry-leading performance.

Learn more:

Read: AI/ML white paper

Visit: Cisco Silicon One
